Last week AI frontrunner OpenAI released a version of its GPT large language model (LLM) that allows paid users to train the AI on closed sets of data and create a bespoke chatbot. That is, you feed the model your own data, and it spits out a GPT custom-built to respond to queries about the data.

Seeking a means of testing this new ability, I gathered the collected text of these newsletters (around 40k words), uploaded it, and created the Polemos Newsletter Advisor, which you can find and use here.

Is it useful?

- The GPT is inconsistent. Sometimes you get the feeling it has digested the information and understood it. For example, I asked it to come up with a 20-question exam based on the newsletters, and it did a pretty good job. I forgot to ask for answers, but it supplied them all (seemingly correct) in a subsequent query.

- The AI-made newsletter exam was superficially plausible, and some of the questions were definitely based on the newsletters. But it also mixed in broader issues, drawn from its general data set, that I have never covered.

- For example: “What issues does the author raise about cryptocurrency mining?” Its “correct” answer was “environmental concerns”, but as far as I can remember I have never discussed this. It’s just a common theme in crypto writing.

I did some other interesting things with the data – for example, asking the AI to harshly critique the columns, and to write a column in my style about asking an AI to write a column in my style.

The first assignment, the critique, contained this zinger: “There’s a fine line between being educated and being a know-it-all, and these columns dance on that line with the grace of an elephant in a china shop.”

Aside from that, the AI seemed incapable of really being nasty. Most of it was actually complimentary. For example, it contained this phrase in the summary: “while these columns are undoubtedly informative and occasionally thought-provoking …”

The second assignment, the column it wrote in my style, was truly horrible. It was close enough to how I write to be uncanny, but suffered from having no new information and being riddled with cliches. If I compare the two, the AI’s earnest attempt to mimic the newsletter was much more damning than the “harsh critique”.

Try out the Advisor and see for yourself. At this point, it’s a classic case of the GPTs: it mixes some insight with a big serving of linguistic pap, with no reliability. You couldn’t use this without manually checking the output.

The big however

Once the underlying GPT engine improves, and the AI becomes better at restricting itself to the supplied data set, this thing is going to be everywhere. Literally everywhere. There is almost no human endeavour that doesn’t rely on some kind of data set specific to that business, and the ability to make your own ring-fenced natural-language interface for that data is a step-change.

There’s another significant thing here, and to me it’s more important than the GPT assistant itself: it’s how I built the Polemos Newsletter Advisor in the first place.

There was no “settings page”, coding or step-by-step process. Instead, you use the GPT interface itself to make the GPT. It asks you what you want, you tell it. Then it asks you to upload the documents. If you need to modify the behaviour or functionality of the bot, you tell it in natural language. For example, I wasn’t happy at first with how generic the Advisor was in its answers, and I told it to restrict itself only to the documents I supplied. It improved a lot at that point (while still being flawed).

Bill Gates wrote this week that “AI is about to completely change how you use computers”, and he is right.

“You’ll simply tell your device, in everyday language, what you want to do.”

Because it’s from Gates, and because it concerns the now old-fashioned topic of computer-human interfaces, this doesn’t feel all that revolutionary.

But it’s going to be massive, in the same way that smartphones were massive.

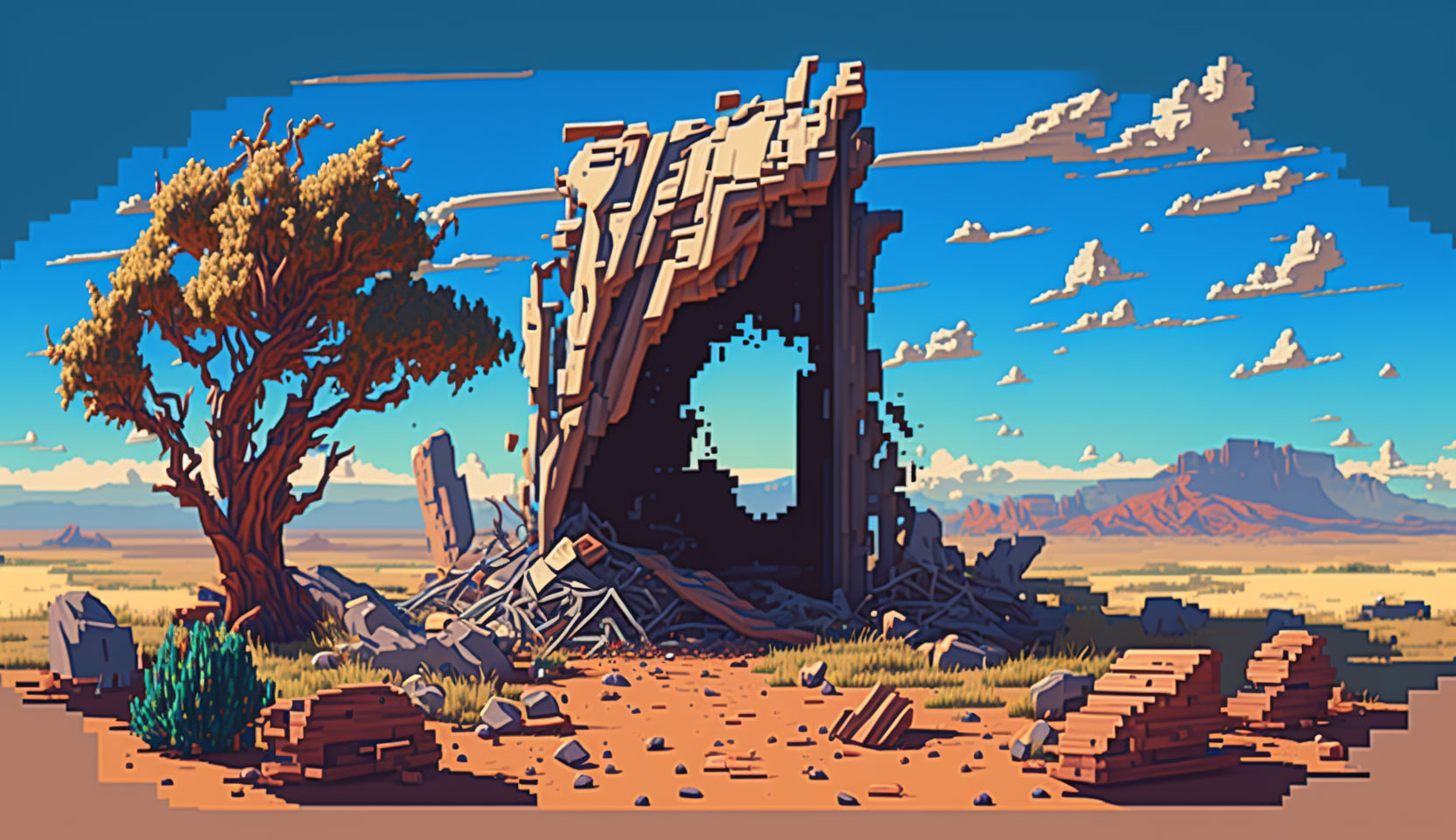

Bazooka Tango cash injection

I wrote last week that things are looking up for blockchain games – despite the employment carnage in the wider game industry – and this week I’ve got some more good news: Bazooka Tango, developer of Shardbound, received an additional $5m in funding. The funding round was led by Bitkraft Ventures.

I spoke to Bazooka founder and CEO Bo Daly a month back on the Key Characters podcast, and he mentioned then his funding philosophy:

“Raising money for games is hard, fundamentally because it is a content business and it has a ton of risk.”

“We didn’t raise money for Shardbound from the community. We’ve tried to stay away from doing pre-sale and going that direction. Players have identified that as a risk to them. We don’t want to create a situation where the people that are backing us might be left holding the bag.”

Listen to the full interview here.

New content from Polemos

We have just launched a Polemos_News YouTube channel, where we post video news every weekday. Check it out.